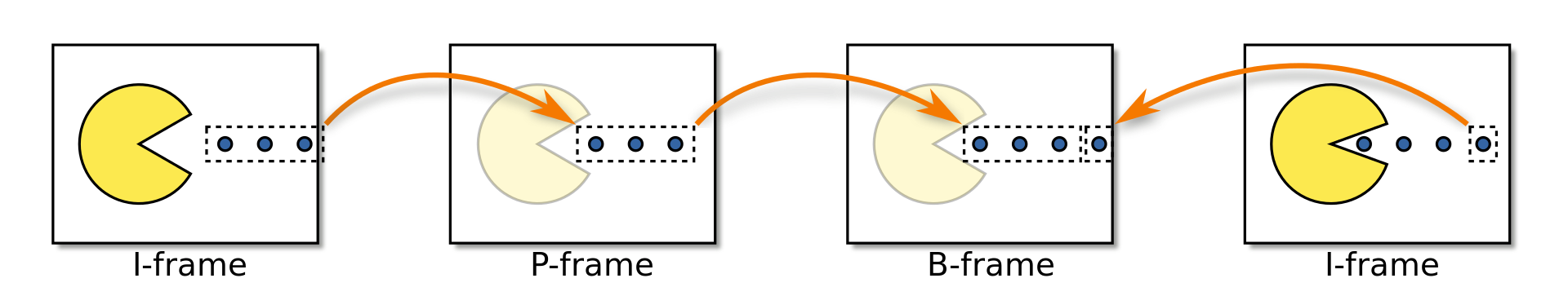

Frame Types, The I, P & B's of video encoding

A very important aspect of video encoding lies in the types of frames that get used. This is just a brief and basic explanation of the different types and how they work. It is mostly based around H.264, but much of it applies outside of it as well.

I-Frames (I)

I-frames, also known as intra-coded pictures, are sometimes called keyframes, although this is quite inaccurate (more on that later). This is the most basic frame type. You can think of it as just a standalone image (like a JPEG).

They have the least amount of compression, as they have to be able to stand by themselves and can't rely on other frames for help (references) to fill in the "blanks" like the later types can.

If one wanted, one could use exclusively this frame type if one was only after the highest quality and did not care much about compression. It won't be optimal for most scenarios though, and is not all that common outside some niche scenarios, like footage recorded by a camera in an intra-only format.

The term keyframe should exclusively be used when we're talking about a special kind of I-frame, called an IDR frame.

Keyframes / IDR Frames (IDR)

Let me preface this by saying that there are scenarios where traditional keyframes aren't used, and SEI recovery points (NAL access units) are relied on instead. I won't cover them here, as I feel their use is quite rare, and I don't know enough about them to talk about them properly. I barely feel comfortable talking about this in the first place.

We need to make mention of IDR frames. These are a bit special, and are a specific type of I-frame. IDR is short for "Instantaneous Decoder Refresh", and they have some special properties.

When we talk about a GOP (group of pictures), we have lots of different frame types within the group, but every GOP starts with a special kind of I-frame, called IDR, and ends right before the next one.

Not all I-frames have to be IDR frames, but all IDR frames are I-frames. This is an important distinction.

An IDR frame signals that no frame prior to it may be referenced by any frame that follows it. This makes it easier for the algorithms to do their job, as they can now exclude everything prior to the IDR. This helps players/decoders when scrubbing (moving the playhead). It can help encoders and other systems work faster and more efficiently as well. I believe HLS/DASH segmenters also rely on IDR frames to know where to split/segment.
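To make the scrubbing point a bit more concrete, here is a minimal sketch of how a player could pick where to start decoding after a seek. The function name `find_seek_start` and the frame list are made up for illustration; real players work on actual bitstreams, not lists of strings.

```python
def find_seek_start(frame_types, target):
    """Return the index of the closest IDR frame at or before `target`.

    Decoding can safely start there, because nothing after an IDR
    is allowed to reference anything before it.
    """
    for index in range(target, -1, -1):
        if frame_types[index] == "IDR":
            return index
    raise ValueError("no IDR frame at or before the target")


# Made-up stream for illustration. Note the plain I-frame at index 3.
stream = ["IDR", "P", "P", "I", "P", "IDR", "P", "P"]

# Seeking to frame 4: the plain I-frame at index 3 is not a safe start,
# since the P-frame at index 4 may still reference frames before it.
seek_start = find_seek_start(stream, 4)
frames_to_decode = stream[seek_start:4 + 1]
```

In this sketch a seek to frame 4 has to go all the way back to frame 0, while a seek to frame 6 only has to go back to frame 5. This is why the distance between IDR frames matters for how snappy scrubbing feels.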

One must take extra care when it comes to IDR frames, and it's better to stay cautious when considering changes, as misconfiguration here can be catastrophic. This is especially true for a lot of live streaming platforms, as they rely on IDR frames to segment, and to make sure switching between transcodes is nice and smooth.
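As a rough sketch of why segmenters care about this, the snippet below splits a stream into segments that each begin on an IDR frame. `split_at_idr` is a hypothetical helper, not how any particular segmenter is implemented. Real ones typically also aim for a target segment duration.

```python
def split_at_idr(frame_types):
    """Group frames into segments, each one starting at an IDR frame."""
    segments = []
    for frame_type in frame_types:
        if frame_type == "IDR":
            segments.append([])  # every IDR opens a new segment
        if not segments:
            raise ValueError("stream must start with an IDR frame")
        segments[-1].append(frame_type)
    return segments


# Made-up stream for illustration. The plain I-frame does not start a segment.
stream = ["IDR", "P", "B", "P", "IDR", "P", "I", "P", "IDR", "B", "P"]

segments = split_at_idr(stream)
segment_lengths = [len(segment) for segment in segments]
```

If the IDR frames arrive at irregular intervals, the segments come out with irregular lengths too, which is one way a misconfigured keyframe interval can cause trouble downstream.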

P-Frames (P)

Also known as a "Predicted Picture". This is where we start to save bits, as this frame type can reference a previous frame. It can reference not only previous frame data, but also motion.

When I say reference the motion of other frames, what I mean is that if we have a bird flying across our frame, the P-frame can look at previous frames and tell the pixels to move in the correct direction, which again saves on data (bits). We won't dive into motion vectors and algorithms here though, that's for a different post (if I ever get around to it).
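The bird example can be sketched as a toy. Here the "bird" is a 2x2 patch of 9s that moves two pixels to the right between frames. The frames, the block position and the motion vector are all made up and picked by hand. A real encoder searches for the vector itself and works on macroblocks with far more detail.

```python
def motion_compensate(reference, block_pos, block_size, motion_vector):
    """Fetch the block that the motion vector points to in the reference frame."""
    row, col = block_pos
    d_row, d_col = motion_vector
    height, width = block_size
    return [
        reference[row + d_row + r][col + d_col:col + d_col + width]
        for r in range(height)
    ]


previous = [
    [0, 0, 0, 0, 0, 0],
    [9, 9, 0, 0, 0, 0],
    [9, 9, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
]
current = [
    [0, 0, 0, 0, 0, 0],
    [0, 0, 9, 9, 0, 0],
    [0, 0, 9, 9, 0, 0],
    [0, 0, 0, 0, 0, 0],
]

# The block at row 1, column 2 of the current frame looked the same
# two pixels to the left in the previous frame.
predicted = motion_compensate(previous, (1, 2), (2, 2), (0, -2))
actual = [row[2:4] for row in current[1:3]]

# The residual is what still has to be stored after the prediction.
residual = [
    [a - p for a, p in zip(actual_row, predicted_row)]
    for actual_row, predicted_row in zip(actual, predicted)
]
```

In this toy case the prediction is perfect, so the residual is all zeros and the block can be described by little more than the motion vector. That is the saving the P-frame is after.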

B-Frames (B)

This frame type can save even more bits than P, as it can not only reference previous frames, but also following (future) frames, hence its name "Bidirectional Predicted Picture".

They are quite similar to P-frames, with the addition of being able to also look to the future for references. I like to think of them as time traveling frames, seeing as they can look into the "future", and even make predictions about future motion. Certainly the coolest frame type in my opinion, and certainly the most complex. This flexibility also means that B-frames can provide the highest level of compression, but also come with the highest encoding cost (time to complete).
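One consequence of the "time travel" is that a decoder needs the future frame before it can decode the B-frames that reference it, so frames are stored in a different order than they are shown. Below is a minimal sketch of that reordering. It assumes the classic pattern where B-frames only reference the nearest non-B frame on either side, and the frame labels and the function name `display_to_decode_order` are made up for illustration.

```python
def display_to_decode_order(frames):
    """Reorder frames so every B-frame comes after the frames it references."""
    decode_order = []
    pending_b = []
    for frame in frames:
        if frame.startswith("B"):
            # Hold B-frames back until their future reference has been emitted.
            pending_b.append(frame)
        else:
            decode_order.append(frame)
            decode_order.extend(pending_b)
            pending_b = []
    decode_order.extend(pending_b)  # trailing B-frames with no future reference
    return decode_order


# Labels are frame type plus display position.
display = ["I0", "B1", "B2", "P3", "B4", "B5", "P6"]
decode = display_to_decode_order(display)
# decode == ['I0', 'P3', 'B1', 'B2', 'P6', 'B4', 'B5']
```

P3 has to be decoded before B1 and B2 even though it is shown after them, which hints at why B-frames add both complexity and delay.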

Closing words

I hope this gave you a bit more insight into frame types. This is certainly base knowledge we need to have before we get more into GOP, macroblocks, quantization and frame type decisions.

A couple of things I would like to mention.

With modern codecs, the inter frames (P and B) can also reference each other, but this was not always the case with the older ones.

There are certain limitations on inter frames depending on the devices playing back the video and the profile used by the encoder. You usually can't just do one I-frame, and then jam in 249 B-frames in a row, and expect everything to be fine.

More advanced usage of inter frames often also comes with a significant cost in compute (more work) and latency, as we need more time to do all of these fancy things, like time travel ;)
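To make the point about long B-frame runs a bit more concrete, here is a small sketch that checks a frame pattern against a limit on consecutive B-frames. The limit of 3 is a made-up value for illustration only. Real limits depend on the codec, profile, encoder and playback device.

```python
def longest_b_run(pattern):
    """Return the length of the longest run of consecutive B-frames."""
    longest = current = 0
    for frame_type in pattern:
        if frame_type == "B":
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


def fits_b_frame_limit(pattern, max_consecutive_b):
    """Check whether a pattern stays within the allowed B-frame run length."""
    return longest_b_run(pattern) <= max_consecutive_b


HYPOTHETICAL_LIMIT = 3  # made-up value, not taken from any spec or device

extreme = "I" + "B" * 249  # one I-frame followed by 249 B-frames
typical = "IBBPBBPBBP"

extreme_ok = fits_b_frame_limit(extreme, HYPOTHETICAL_LIMIT)
typical_ok = fits_b_frame_limit(typical, HYPOTHETICAL_LIMIT)
```

The extreme pattern fails the check while the more typical one passes. Longer B-frame runs also mean more frames have to be held back before they can be decoded, which is where part of the extra latency comes from.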

This was quite basic, and I encourage you to read more about it if you're interested.

Reminder: you can always reach out to me if you spot an error, an inconsistency, or a poorly written/explained concept or topic. Contact form is here